An explainable deep machine vision framework for plant stress phenotyping

Friday, 2018/05/11 | 07:56:33


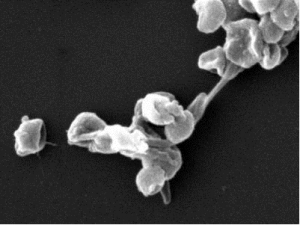

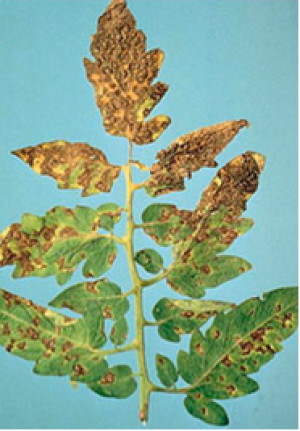

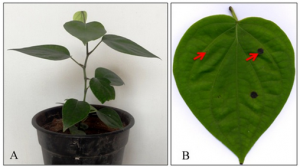

Sambuddha Ghosal, David Blystone, Asheesh K. Singh, Baskar Ganapathysubramanian, Arti Singh, and Soumik Sarkar. PNAS May 1, 2018; vol. 115 (18) 4613-4618

Significance

Plant stress identification based on visual symptoms has predominately remained a manual exercise performed by trained pathologists, primarily due to the occurrence of confounding symptoms. However, the manual rating process is tedious, is time-consuming, and suffers from inter- and intrarater variabilities. Our work resolves such issues via the concept of explainable deep machine learning to automate the process of plant stress identification, classification, and quantification. We construct a very accurate model that can not only deliver trained pathologist-level performance but can also explain which visual symptoms are used to make predictions. We demonstrate that our method is applicable to a large variety of biotic and abiotic stresses and is transferable to other imaging conditions and plants.

Abstract

Current approaches for accurate identification, classification, and quantification of biotic and abiotic stresses in crop research and production are predominantly visual and require specialized training. However, such techniques are hindered by subjectivity resulting from inter- and intrarater cognitive variability. This translates to erroneous decisions and a significant waste of resources. Here, we demonstrate a machine learning framework’s ability to identify and classify a diverse set of foliar stresses in soybean [Glycine max (L.) Merr.] with remarkable accuracy. We also present an explanation mechanism, using the top-K high-resolution feature maps that isolate the visual symptoms used to make predictions. This unsupervised identification of visual symptoms provides a quantitative measure of stress severity, allowing for identification (type of foliar stress), classification (low, medium, or high stress), and quantification (stress severity) in a single framework without detailed symptom annotation by experts. We reliably identified and classified several biotic (bacterial and fungal diseases) and abiotic (chemical injury and nutrient deficiency) stresses by learning from over 25,000 images. The learned model is robust to input image perturbations, demonstrating viability for high-throughput deployment. We also noticed that the learned model appears to be agnostic to species, seemingly demonstrating an ability of transfer learning. The availability of an explainable model that can consistently, rapidly, and accurately identify and quantify foliar stresses would have significant implications in scientific research, plant breeding, and crop production. The trained model could be deployed in mobile platforms (e.g., unmanned air vehicles and automated ground scouts) for rapid, large-scale scouting or as a mobile application for real-time detection of stress by farmers and researchers.
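To make the idea of a top-K feature-map explanation more concrete, here is a minimal Python sketch. It is not the authors' procedure: the ranking criterion (mean activation), the pixel-wise averaging, the threshold, and every function name below are assumptions chosen only for illustration. It ranks a set of feature maps, averages the K strongest into one explanation map, and reports the fraction of leaf pixels whose value exceeds a threshold as a rough severity score.

```python
from typing import List

FeatureMap = List[List[float]]  # one H x W activation map (hypothetical layout)


def mean_activation(fmap: FeatureMap) -> float:
    """Average value over all pixels of a single feature map."""
    total = sum(sum(row) for row in fmap)
    count = sum(len(row) for row in fmap)
    return total / count


def top_k_maps(feature_maps: List[FeatureMap], k: int) -> List[int]:
    """Indices of the k maps with the highest mean activation (assumed criterion)."""
    ranked = sorted(
        range(len(feature_maps)),
        key=lambda i: mean_activation(feature_maps[i]),
        reverse=True,
    )
    return ranked[:k]


def explanation_map(feature_maps: List[FeatureMap], indices: List[int]) -> FeatureMap:
    """Pixel-wise average of the selected feature maps."""
    height = len(feature_maps[0])
    width = len(feature_maps[0][0])
    return [
        [sum(feature_maps[i][r][c] for i in indices) / len(indices) for c in range(width)]
        for r in range(height)
    ]


def severity(expl: FeatureMap, leaf_mask: List[List[bool]], threshold: float) -> float:
    """Fraction of leaf pixels whose explanation value exceeds the threshold."""
    leaf_pixels = 0
    flagged = 0
    for r, row in enumerate(expl):
        for c, value in enumerate(row):
            if leaf_mask[r][c]:
                leaf_pixels += 1
                if value > threshold:
                    flagged += 1
    return flagged / leaf_pixels if leaf_pixels else 0.0


# Toy data: three 2 x 2 feature maps and a mask marking which pixels are leaf.
maps = [
    [[0.0, 0.1], [0.0, 0.1]],
    [[0.9, 0.1], [0.8, 0.0]],
    [[0.7, 0.1], [0.6, 0.2]],
]
mask = [[True, True], [True, False]]

selected = top_k_maps(maps, k=2)
score = severity(explanation_map(maps, selected), mask, threshold=0.5)
print(selected, score)
```

In a real pipeline the feature maps would come from a convolutional layer of the trained network, and the leaf mask from a segmentation step; both are simply supplied by hand here.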

See: http://www.pnas.org/content/115/18/4613

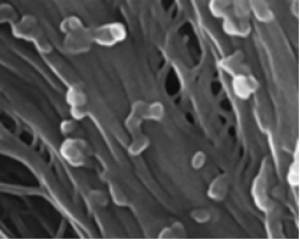

Figure 1: Schematic illustration of foliar plant stresses in soybean grouped into two major categories, biotic (bacterial and fungal) and abiotic (nutrient deficiency and chemical injury) stress. The images were used to develop the DCNN for the following eight stresses: bacterial blight (Pseudomonas savastanoi pv. glycinea), bacterial pustule (Xanthomonas axonopodis pv. glycines), sudden death syndrome (SDS, Fusarium virguliforme), Septoria brown spot (Septoria glycines), frogeye leaf spot (Cercospora sojina), iron deficiency chlorosis (IDC), potassium deficiency, and herbicide injury. For each stress, information such as symptom descriptors, areas of appearance, and most commonly mistaken stresses that exhibit similar symptoms is listed. These particular foliar stresses were chosen because of their prevalence and confounding symptoms.
